Surviving Epic MegaJam, or the best way to celebrate a wedding anniversary

This post contains spoilers for the game! If you have a VR headset, go play it!

We are Dmitry Molchanov and Julia Molchanova. We had been doing AI research since 2014 before leaving our jobs on the eve of New Year 2021 to become indie VR game developers. We had no background in anything gamedev-related, so we had to learn everything from scratch. We have been binge-watching courses on Udemy, Unreal Academy and YouTube, learning Unreal Engine and Blender, and making some tech demos as learning projects.

A month and a half ago we started playing around with Gorilla Tag-style locomotion and became completely hooked on it.

Although we didn’t have any gamedev-related background, our previous work in academia did give us a serious advantage. We were completely used to crunch, were pretty fast at prototyping and somehow making things work, and were good at scoping short projects for tight deadlines. You could call us crunch junkies: crunch gets us high and makes us finally feel alive. This comes with a downside, of course: we can’t really work without crunching, and our code is terrible.

Our 7th wedding anniversary also happened to fall during the jam. We can’t think of a better way to celebrate than doing the jam together.

Preparations for the jam

We wanted to experience the full stack of making a small game before entering the Epic MegaJam. We expected to hit roadblocks at every single step and figured it would be a good idea to overcome them beforehand. So we entered a small week-long jam and made a small game. As expected, we learned a TON, and we would not have been able to complete our submission if the MegaJam had been our first game jam.

We want to make VR games, so the “Is this real life” category was an obvious target. “NVisionary environments” is too tough to pull off in VR, especially when targeting Oculus Quest. Sound is our weakest area, so “Conductor” was out of the question too. So, given there are only two of us, we decided to target “Is this real life”, “Tiny award” and “Procedural magicians”.

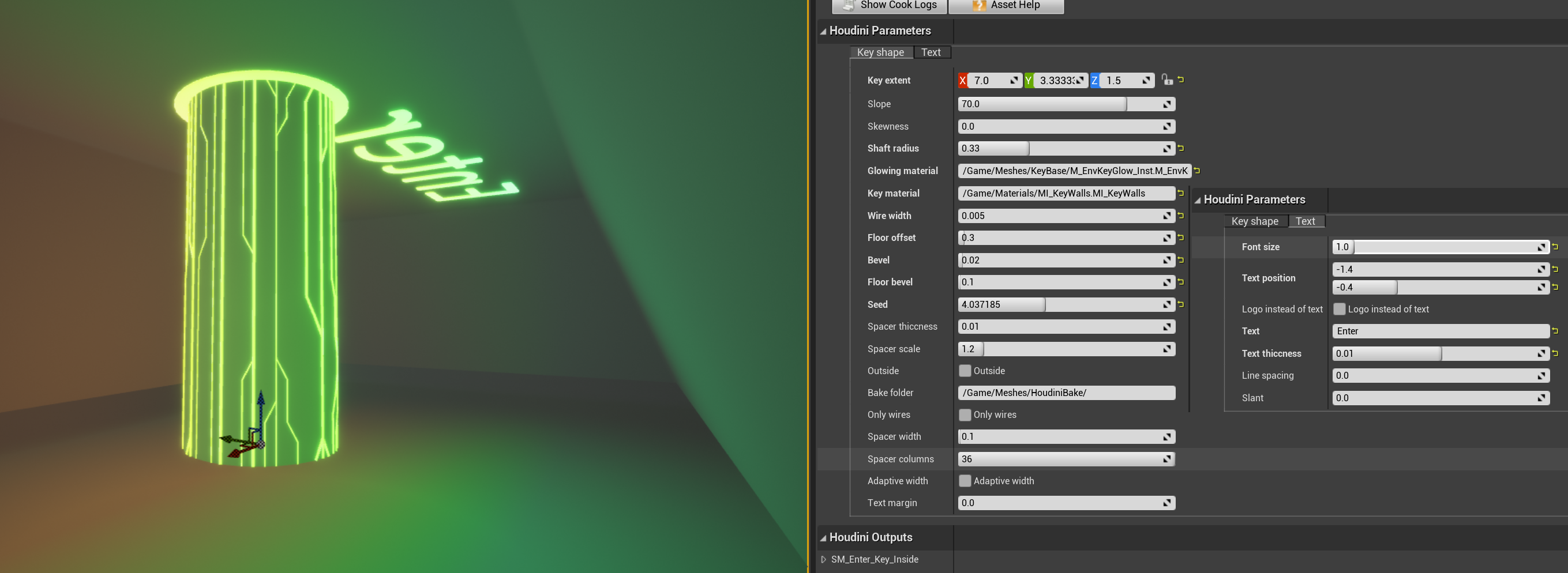

We had to learn Houdini from scratch, and we only had a week until the start of the jam. Houdini Kitchen was a godsend: we completed the whole course in three days, reproducing most of the tutorials in Houdini. To get familiar with Houdini Engine, we also reimplemented the platform tool from the previous year’s Procedural Magician.

Finally, we studied last year’s winning titles and listened closely to the discussion during the stream where the winners were announced. It sounded like the overall completeness and level of polish of the game were among the most important factors. Building a prototype with a lot of potential for expansion seemed to earn bonus points too. These lessons heavily informed our decisions.

We spent the last day before the jam preparing a template for the project. We copied the basic VR pawn setup and the locomotion system from our previous game, and went through all the project settings to optimize rendering for mobile VR and to optimize packaging. We also disabled all plugins except the VR plugins and a few other essentials, and made sure that a shipping build of the template ran as expected on both PCVR and Oculus Quest 2. We also had to set up a shared DDC (Derived Data Cache), since one of our PCs is a potato. It is crucial to do all this setup before the jam, since changing many of the project settings requires recompiling all shaders. Of course, we also set up source control: we use free Azure Repos with Git LFS.

During the jam

Days 1-2. The theme

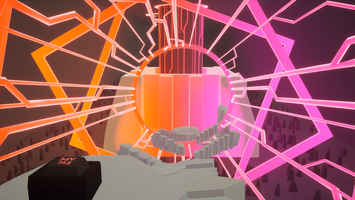

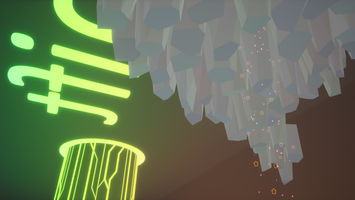

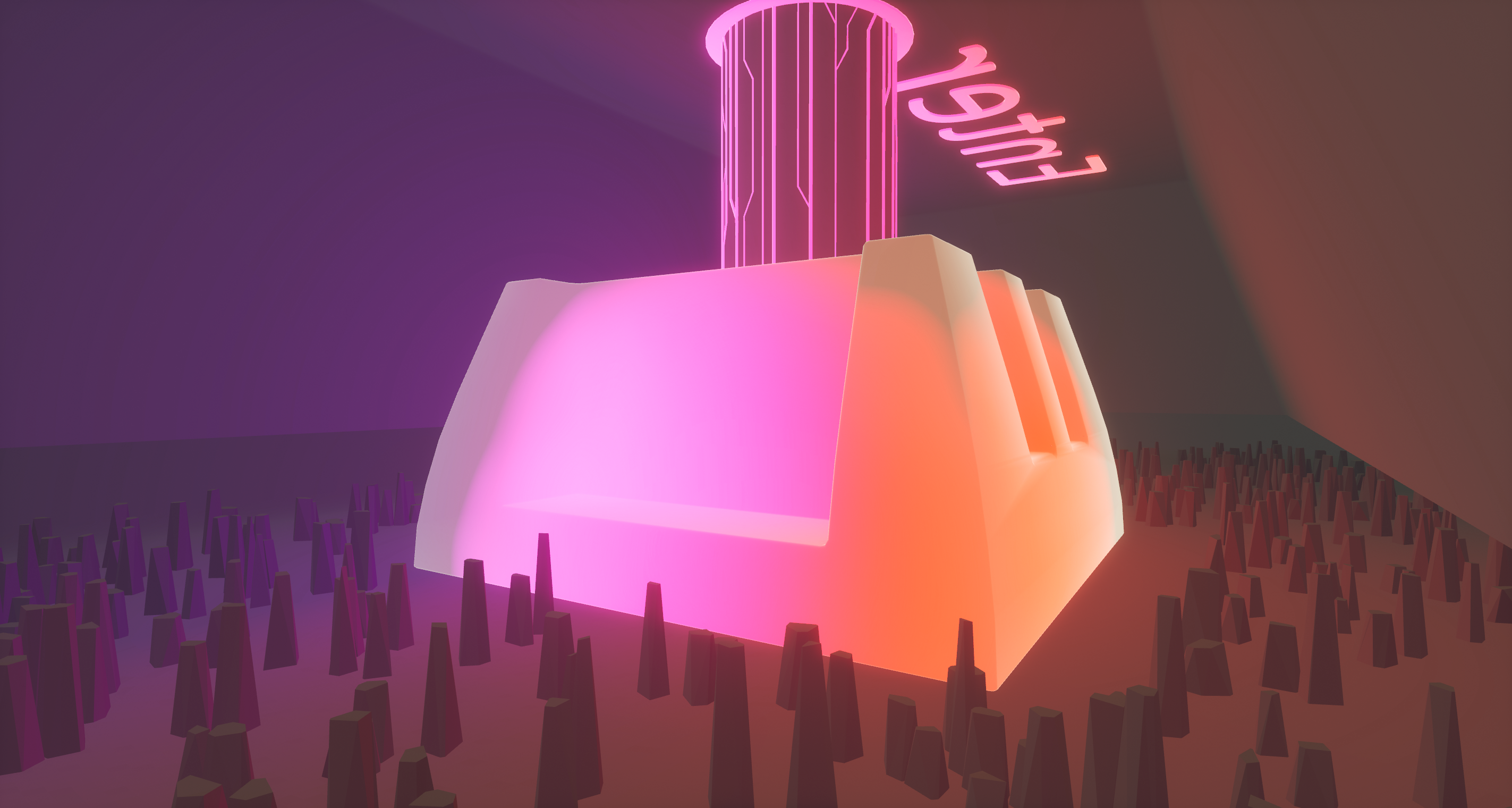

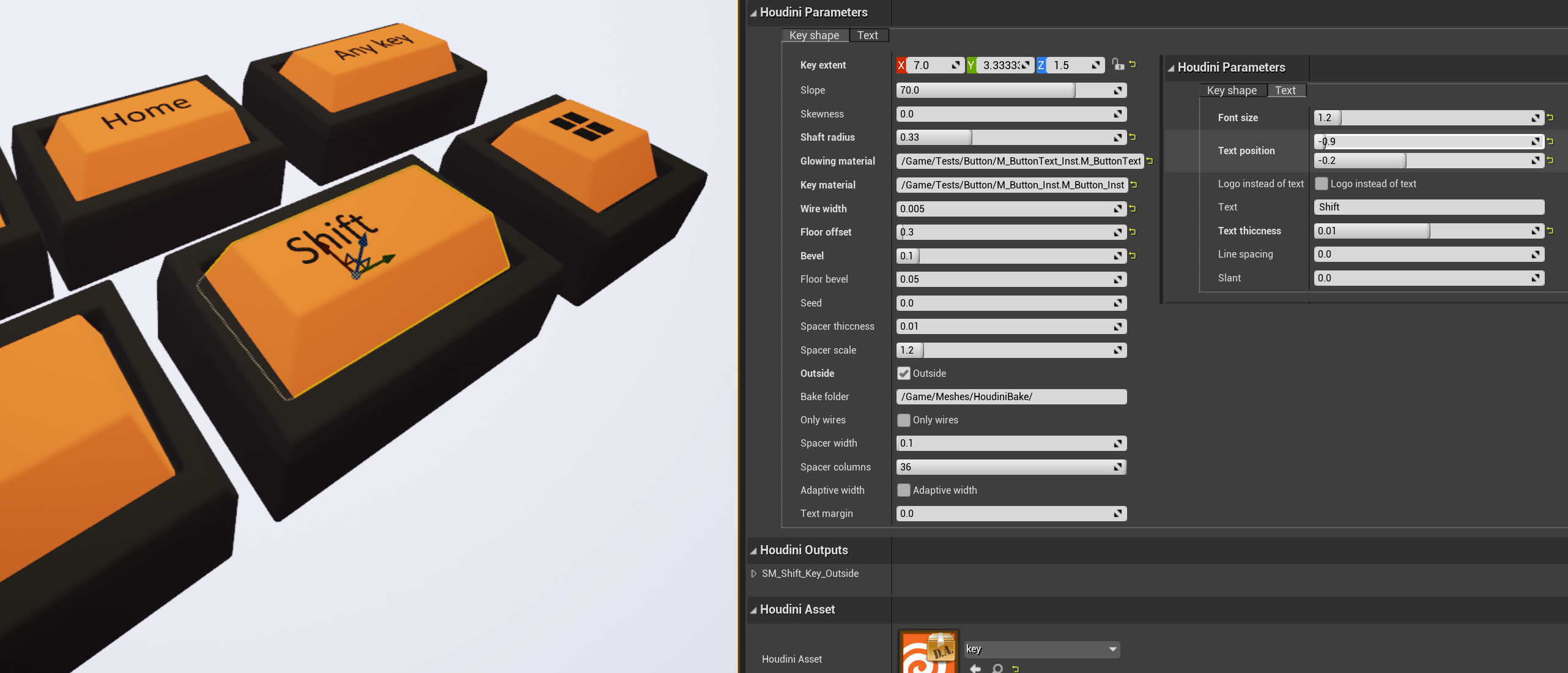

The theme was tough for us. Since we were sitting on a very cool locomotion system, we knew we had to interpret “running” as the action of movement. The “space” part was much more difficult… “Space” as in “cosmos” is too obvious, and it is difficult to imagine running out of a Hilbert space… After a couple of hours, we noticed the space bar of an RGB mechanical keyboard, and the ideas started flowing. You are a small creature that got trapped inside a keyboard and needs to run out of the space bar. How did it get into the keyboard? Through the Enter key, of course. The RGB lighting seemed like a fun challenge, and the change of perspective (being trapped inside a giant key) should work well in VR too. We did not manage to develop the idea fully. Who is the player? Why is he trapped inside a keyboard? What do the platforms look like? Why go to the space bar? But we knew we had to make the inside of an RGB keyboard, so we went to work. A day later, we had an HDA tool to procedurally build all kinds of keyboard buttons and a set of sophisticated shaders to fake dynamic RGB lighting with unlit materials.
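The shaders themselves aren’t shown in this post. As a rough illustration of how a scrolling “rainbow wave” can be faked without any real lights, the emissive color of each key can be computed from its position and the current time by cycling the hue. This is a Python sketch for readability (in Unreal the same math would live in a material graph); the function name and the `wave_scale`, `speed` and `brightness` parameters are hypothetical.

```python
import colorsys
from typing import Tuple


def rgb_wave_color(x: float, t: float, wave_scale: float = 0.01,
                   speed: float = 0.25, brightness: float = 1.0) -> Tuple[float, float, float]:
    """Emissive RGB for a key at horizontal position x (cm) at time t (s).

    Hypothetical parameters: wave_scale is hue cycles per cm of keyboard,
    speed is how many full hue cycles per second the wave scrolls.
    """
    # Hue depends on both position and time, so the rainbow scrolls across the keys.
    hue = (x * wave_scale + t * speed) % 1.0
    return colorsys.hsv_to_rgb(hue, 1.0, brightness)


# A row of five keys 2 cm apart, sampled at t = 1 s.
row = [rgb_wave_color(i * 2.0, 1.0) for i in range(5)]
```

Because the output is purely a function of position and time, every key can be evaluated independently, which is what makes this kind of effect cheap enough for an unlit material.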

So the idea is to travel from the Enter key to the Space key. We wanted each key to have its own thematic twist. We decided to use the Enter key to show off our dynamic RGB lighting with a cool startup sequence that lights up the level. Inside the Shift key, something needs to be shifting, so we came up with propulsion volumes similar to the ones in Daedalus and the excursion funnels in Portal 2. We also decided to decorate the level with giant arrows that aimlessly fly (shift) around. The Windows key had to have windows, so we planned to make fake portals that display a captured cubemap, similar to the portal map in the Oculus example projects. Finally, in the Space level we decided to play with gravity. BTW, it turns out it is extremely illegal to use the Windows logo in your game, so we almost got screwed.
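How the propulsion volumes work internally isn’t described here. One way to get an excursion-funnel feel, sketched below in Python under assumed names and tuning values, is to blend the player’s velocity toward a fixed flow velocity while they are inside the volume instead of adding a raw force, so the player settles at the flow speed rather than accelerating indefinitely.

```python
from dataclasses import dataclass
from typing import Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class PropulsionVolume:
    """Axis-aligned box that carries the player along `direction` (hypothetical sketch)."""
    min_corner: Vec3
    max_corner: Vec3
    direction: Vec3            # unit vector of the flow
    flow_speed: float = 600.0  # cm/s (Unreal units); assumed value
    stiffness: float = 4.0     # 1/s, how quickly velocity converges to the flow; assumed

    def contains(self, p: Vec3) -> bool:
        return all(lo <= c <= hi for lo, c, hi in zip(self.min_corner, p, self.max_corner))

    def apply(self, position: Vec3, velocity: Vec3, dt: float) -> Vec3:
        """Return the player's velocity after one tick of dt seconds."""
        if not self.contains(position):
            return velocity
        target = tuple(d * self.flow_speed for d in self.direction)
        # Move a fraction of the way toward the flow velocity each tick;
        # clamping alpha keeps a long frame from overshooting the target.
        alpha = min(1.0, self.stiffness * dt)
        return tuple(v + (tv - v) * alpha for v, tv in zip(velocity, target))
```

Steering toward a target velocity also makes the volume forgiving to tune: `flow_speed` sets how fast the player travels, and `stiffness` only sets how quickly they get there.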

Days 3-4. Implementing the mechanics

We needed to implement and test a bunch of different mechanics: propulsion volumes, clickable mechanical keys, portals, checkpoints and custom killboxes, and changing gravity. We also had to keep the limitations of Oculus Quest in mind and make sure everything would run smoothly. To do all that, we created a separate testing level for each mechanic and developed and tested each one in isolation. This allowed for very fast iteration, since the levels are small and free of distractions. We also had a stress-testing level with lots of geometry, particles and portals (all the heavy stuff) to make sure the game runs smoothly on the Quest.
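The post doesn’t say how checkpoints and killboxes are wired together. A minimal Python sketch of one reasonable rule, with hypothetical names: remember the furthest checkpoint reached, and send the player back there whenever a killbox fires.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


class CheckpointTracker:
    """Remembers where to respawn after touching a killbox (hypothetical sketch)."""

    def __init__(self, start_position: Vec3):
        self.best_index = -1
        self.respawn_position = start_position

    def on_checkpoint(self, index: int, position: Vec3) -> None:
        # Only move forward: touching an earlier checkpoint again must not
        # send the player further back than they have already progressed.
        if index > self.best_index:
            self.best_index = index
            self.respawn_position = position

    def on_killbox(self) -> Vec3:
        """Return where the player should be teleported after dying."""
        return self.respawn_position
```

Keeping this logic free of any level geometry is what makes it easy to exercise in a tiny, dedicated testing level.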

A passable idea for the lore appeared a couple of days into the jam (you really don’t want to hear the earlier ideas). You work at a keyboard factory, and this is a new keyboard model to test. You are a tiny worker, and each key has a special layout for you to activate it and assess the results. Naturally, you would have very soft hands, suitable for pressing buttons gently. Initially, we wanted to go for cartoonish puffy gloves. But after a couple of frustrating hours trying to model them (an insane amount of time to spend on nothing during a jam), we luckily came up with the idea of cat paws/gloves. They look really soft, cats love keyboards, everyone loves cats: what more could you wish for?

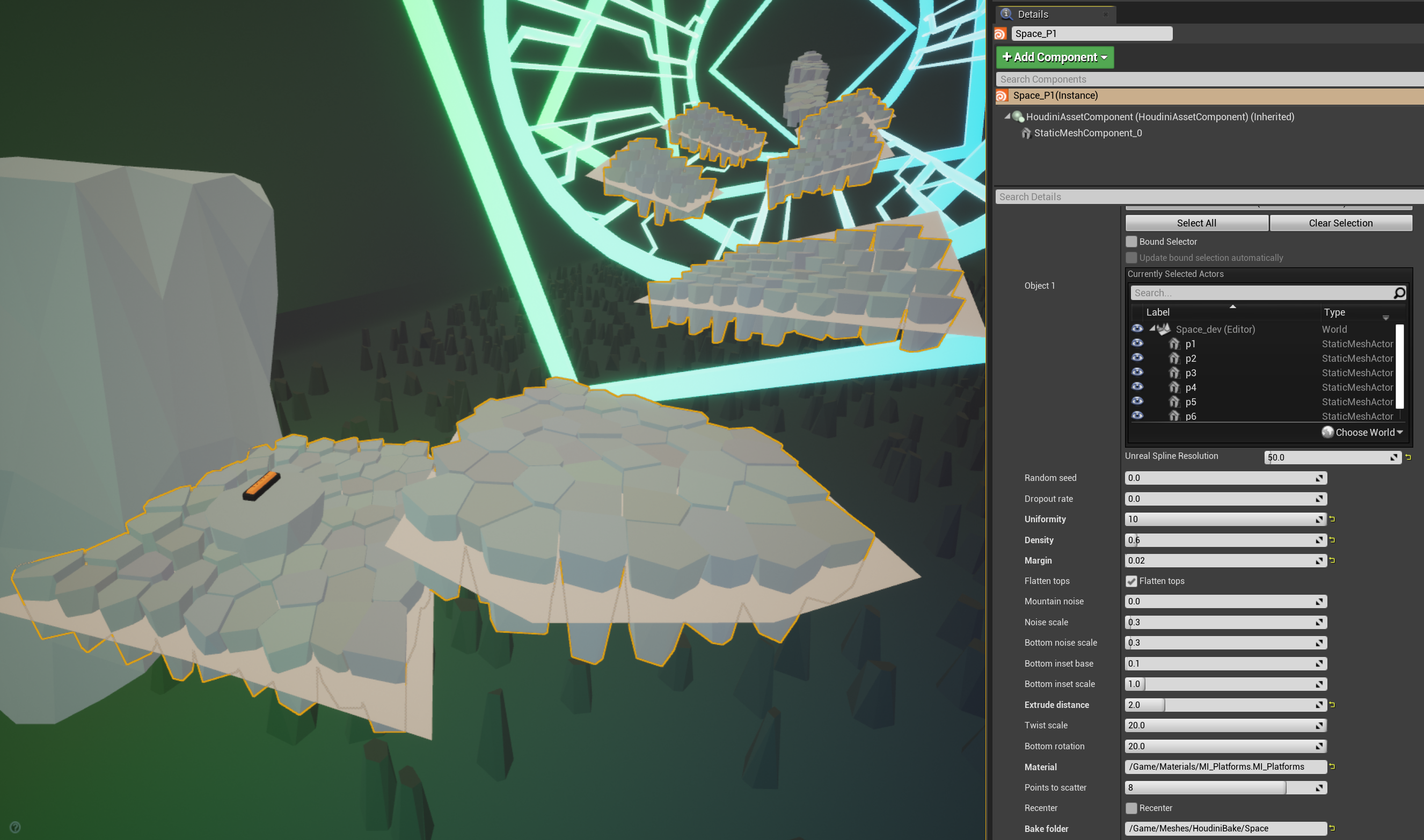

What kind of platforms could a keyboard have? Keyboard keys would be too cheesy, so we went with something that is easy to make and looks cool, but doesn’t necessarily make sense: take the top surface of the blockout geometry, scatter some points, perform a Voronoi fracture, and use the result as a base to grow crystals. We turned this into a highly parameterized HDA tool that we used to make most of our platforms, and made a similar tool for larger boulders and columns. We went for this fractured look because we didn’t want the platforms to look too uniform, and we wanted enough detail for the player to feel the movement.
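The actual tool is a Houdini HDA, and its internals aren’t shown in the post. The core idea (scatter points on the top face, split it into Voronoi cells, give each cell its own crystal height) can be illustrated with a small pure-Python sketch on a 2D grid. All function names and the `base_height`/`jitter` parameters are hypothetical; in Houdini the fracture itself would be done by its own nodes on real geometry.

```python
import random
from typing import List, Tuple

Point = Tuple[float, float]


def scatter_points(width: float, depth: float, count: int, rng: random.Random) -> List[Point]:
    """Uniformly scatter `count` seed points on a width x depth top face."""
    return [(rng.uniform(0.0, width), rng.uniform(0.0, depth)) for _ in range(count)]


def voronoi_cells(width: float, depth: float, seeds: List[Point], resolution: int) -> List[List[int]]:
    """Label each grid sample with the index of its nearest seed, i.e. its Voronoi cell."""
    labels = []
    for j in range(resolution):
        y = (j + 0.5) * depth / resolution
        row = []
        for i in range(resolution):
            x = (i + 0.5) * width / resolution
            # Brute-force nearest seed: fine for a sketch, too slow for real meshes.
            nearest = min(range(len(seeds)),
                          key=lambda k: (seeds[k][0] - x) ** 2 + (seeds[k][1] - y) ** 2)
            row.append(nearest)
        labels.append(row)
    return labels


def crystal_heights(num_cells: int, base_height: float, jitter: float,
                    rng: random.Random) -> List[float]:
    """One crystal per cell; random heights keep the platform top from looking uniform."""
    return [base_height + rng.uniform(0.0, jitter) for _ in range(num_cells)]


rng = random.Random(7)  # fixed seed so the same platform is generated every time
seeds = scatter_points(400.0, 300.0, 12, rng)
labels = voronoi_cells(400.0, 300.0, seeds, resolution=32)
heights = crystal_heights(len(seeds), base_height=20.0, jitter=15.0, rng=rng)
```

Seeding the random generator matters for a level-design tool: recooking the asset with unchanged parameters should give the same platform, so only deliberate edits change the layout.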

Since we used unlit materials and didn’t want to use any textures, we had to make the crystals slightly different colors so that the edges between them stayed visible. We faked directional lighting in our unlit shaders to bring out the edges even more. Our level design reinforces this too: you rarely need to go down, because looking down at the platforms makes their tops blend together.
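The shader code isn’t published. One simple way to fake directional lighting in an unlit (emissive-only) material is to compute a Lambert term from the surface normal and a hard-coded light direction, then mix it with an ambient floor; per-crystal variation can come from a small random tint. The Python sketch below shows that math; the names, the light direction and the constants are all assumptions.

```python
import math
import random
from typing import Tuple

RGB = Tuple[float, float, float]
Vec3 = Tuple[float, float, float]


def normalize(v: Vec3) -> Vec3:
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def fake_lit_color(base: RGB, normal: Vec3,
                   light_dir: Vec3 = (0.3, 0.5, 0.8), ambient: float = 0.45) -> RGB:
    """Unlit 'lighting': shade from a hard-coded light direction, no real lights.

    light_dir points from the surface toward the imaginary light (assumed value);
    ambient keeps faces turned away from it from going fully black.
    """
    n = normalize(normal)
    light = normalize(light_dir)
    lambert = max(0.0, sum(a * b for a, b in zip(n, light)))
    shade = ambient + (1.0 - ambient) * lambert
    return tuple(min(1.0, c * shade) for c in base)


def crystal_tint(base: RGB, rng: random.Random, variation: float = 0.08) -> RGB:
    """Nudge each crystal's color slightly so edges between neighbors stay visible."""
    return tuple(min(1.0, max(0.0, c + rng.uniform(-variation, variation))) for c in base)
```

Since adjacent crystal faces have different normals, they get different shades even with identical base colors, which is what brings out the edges without textures or dynamic lights.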

Day 5. Level design

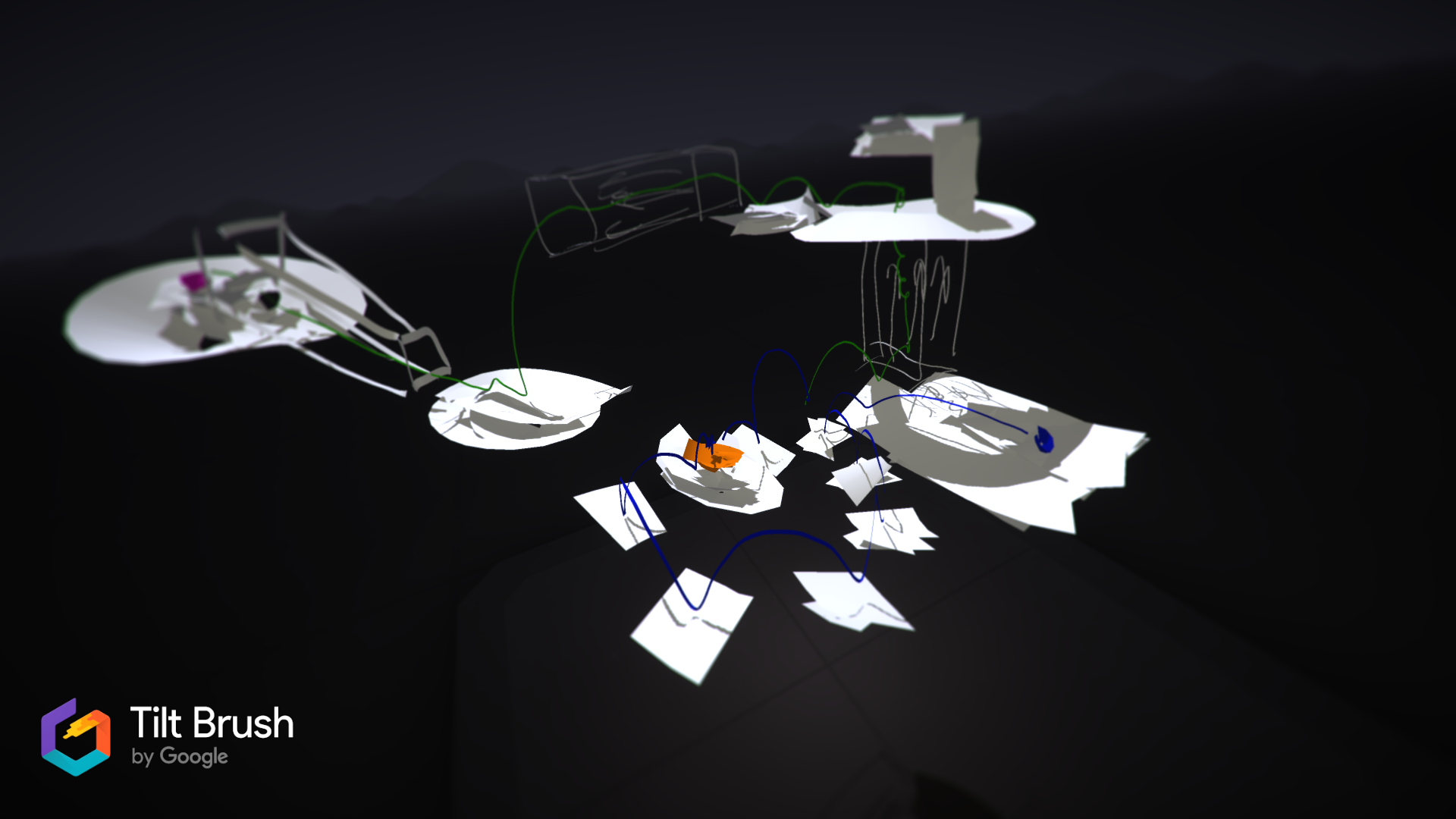

When all the mechanics were ready, we needed to put them together and create some actual levels. Blocking out a level from scratch is hard, and we found Tilt Brush incredibly useful for drafting a concept first. We then tried to block out the level inside Unreal and convert it into the finished level using our Houdini platform tools, but BSPs kept producing broken geometry and were annoying to edit. Instead, we exported the environment to Blender and blocked out the level there (E to extrude, S to scale FTW). Then it took one click to export it back to the Unreal Editor, where Houdini Engine converted the meshes into finalized assets. The whole workflow turned out to be very efficient: quickly edit the blockout in Blender, use “Send to Unreal” to update the meshes inside Unreal in one click, and recook the Houdini assets to see the final result. Repeat until the level is done.

We had two kinds of keys in our levels. Orange keys did something to the level, e.g. activated the propulsion volumes or changed gravity: usually something essential to completing the level. Black RGB keys teleported you to the corresponding level (e.g. you push the “Shift” key to go to the “Shift” level). This gave us a lot of ideas: for example, you press the “Esc” key to escape the last level, “Home” brings you “home”, and “End” quits the game. There’s also an easter egg or two ;)
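Because the key labels themselves say what the keys do, a table-driven mapping is a natural fit. This Python sketch is only an illustration of that idea: the level names, the `Action` kinds and the `on_key_pressed` helper are hypothetical, not taken from the project.

```python
from enum import Enum, auto


class Action(Enum):
    OPEN_LEVEL = auto()
    QUIT_GAME = auto()


# Black RGB keys: the label says where the key takes you (hypothetical level names).
KEY_ACTIONS = {
    "Shift": (Action.OPEN_LEVEL, "ShiftLevel"),
    "Space": (Action.OPEN_LEVEL, "SpaceLevel"),
    "Esc": (Action.OPEN_LEVEL, "Ending"),
    "Home": (Action.OPEN_LEVEL, "HubLevel"),
    "End": (Action.QUIT_GAME, None),
}


def on_key_pressed(label: str) -> str:
    """Describe what pressing a key does; keys missing from the table do nothing."""
    action, target = KEY_ACTIONS.get(label, (None, None))
    if action is Action.OPEN_LEVEL:
        return f"open level {target}"
    if action is Action.QUIT_GAME:
        return "quit game"
    return "no-op"
```

Adding a new key pun then means adding one table entry rather than writing new logic.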

We had made three of the four levels and still hadn’t come up with an ending. In a random flash of inspiration, we came up with a punny title, “In space no one can hear you click”, and it immediately changed the mood of the game to something mysterious and surreal. It also meant that the player had to overcome some grave danger at the end of the game. The space level wasn’t just about low gravity anymore. Something goes wrong, and the gravity changes, pulling you towards the center of the key with ever-growing force. No one is there to hear you or help you. And there’s no way you can get out of there. Unless... unless you try again and escape the space bar in time.

Day 6. Finishing the levels and making audio

Until day 6 of the jam, we thought we wouldn’t be able to make two endings. Implementing this scenario, where gravity grows over time and pulls you towards the center of the level, was really challenging. Many things could go wrong, and it needed lots of playtesting, balance tweaking and peculiar level design. The last level wasn’t about tricky platforming in low gravity anymore, but about overcoming a growing force, almost clinging to the platforms near the end. We took that bet, and everything played out perfectly. Also, now that we had finally set the mood for the game, we knew what kind of music we needed.
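The post doesn’t give the exact force curve. A minimal Python sketch, assuming the pull points from the player toward a fixed center and grows linearly with the time since the event started (both assumptions, as are the constants and the 72 Hz tick), shows why the level becomes a race: the longer you hesitate, the stronger the pull you have to fight.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def pull_acceleration(position: Vec3, center: Vec3, elapsed: float,
                      base: float = 200.0, growth: float = 150.0) -> Vec3:
    """Acceleration (cm/s^2) toward `center`, growing linearly with `elapsed` seconds.

    base and growth are hypothetical tuning values.
    """
    offset = [c - p for c, p in zip(center, position)]
    distance = math.sqrt(sum(o * o for o in offset))
    if distance == 0.0:
        return (0.0, 0.0, 0.0)
    strength = base + growth * elapsed
    return tuple(strength * o / distance for o in offset)


def simulate(position: Vec3, velocity: Vec3, center: Vec3, duration: float,
             dt: float = 1.0 / 72.0) -> Tuple[Vec3, Vec3]:
    """Semi-implicit Euler integration of a free-falling player (assumed 72 Hz tick)."""
    t = 0.0
    pos, vel = list(position), list(velocity)
    while t < duration:
        acc = pull_acceleration(tuple(pos), center, t)
        vel = [v + a * dt for v, a in zip(vel, acc)]
        pos = [p + v * dt for p, v in zip(pos, vel)]
        t += dt
    return tuple(pos), tuple(vel)
```

With a linear ramp, `base` decides how the level feels at first and `growth` decides how long the player has before escape becomes impossible, which gives two separate knobs for the balance tweaking described above.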

How do you make an atmospheric soundtrack if you know nothing about sound design? Take a synth (we used Helm), listen to ALL of its presets, choose a couple that fit your mood, and modify them to your taste. Then randomly play something so slow that no one will notice it doesn’t make any sense. Keep doing it until you get something that sounds fine.

The SFX for the key presses were recorded from our mechanical keyboard (note that there are separate press and release sounds; little things like that matter a lot). We also tried to mimic the force curve of a real mechanical key using haptics. “Set Haptics by Value” did not give us the fine control we wanted, but we managed to make the vibration noticeably increase until actuation and switch it off afterwards. Also, Oculus and Vive haptics work in completely different ways, so we prioritized Oculus haptics and had to settle for that.
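The post describes the haptic idea but not the exact curve. The Python sketch below shows one way to express it: the vibration amplitude ramps up with how far the key is pushed, drops to zero once the key actuates, and separate press/release events fire on threshold crossings (with a lower release threshold, i.e. hysteresis, so the key doesn’t chatter). The thresholds and names are hypothetical; in Unreal the returned amplitude would be what you feed to something like the “Set Haptics by Value” node each tick.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class MechanicalKeyHaptics:
    actuation_depth: float = 0.6   # fraction of key travel where the key clicks (hypothetical)
    release_depth: float = 0.4     # lower release threshold gives hysteresis (hypothetical)
    min_amplitude: float = 0.1
    max_amplitude: float = 1.0
    pressed: bool = False

    def update(self, depth: float) -> Tuple[float, Optional[str]]:
        """Return (amplitude, event) for a key pushed to `depth` in [0, 1].

        event is "press", "release" or None, e.g. to trigger the matching sound.
        """
        depth = min(1.0, max(0.0, depth))
        event = None
        if not self.pressed and depth >= self.actuation_depth:
            self.pressed, event = True, "press"
        elif self.pressed and depth <= self.release_depth:
            self.pressed, event = False, "release"

        if self.pressed or depth == 0.0:
            # After actuation (or when untouched) the vibration is cut entirely.
            return 0.0, event
        # Ramp the vibration up as the key approaches the actuation point.
        ramp = depth / self.actuation_depth
        return self.min_amplitude + (self.max_amplitude - self.min_amplitude) * ramp, event
```

The sudden drop from strong vibration to nothing at the actuation point is what stands in for the “click” of a real switch.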

Day 7. Narration, polish and submission

Narration was a tough choice. Initially, we wanted the narrator to explain everything to the player. But the new mood turned the game into a surreal experience, and we didn’t want to ruin the immersion with excessive narration. Sometimes less is more, and we thought it would be interesting to let the player make sense of what is happening. Plot is like Swiss cheese: less plot means fewer plot holes. So the narrator doesn’t warn the player about anything and doesn’t know what’s going on with the last level. It’s an experimental keyboard, after all.

Polish

Finally, it was time for finishing touches. We added camera and sound fades for deaths and level transitions. These were a bit tricky to get working on both PCVR and Oculus Quest, since the default camera fade relies on post-process effects that are only available with Mobile HDR (too expensive). We made sure all our checkpoints worked as expected. We added a restart button for the (hopefully unlikely) case that the player gets stuck without dying. We made the last level easier. We adjusted the shaders for standalone Oculus, since for some reason they gave a completely different result (a darker, muddy look) by default. We tweaked some decorations and added widgets with credits and controls. And, most importantly, we added bloom to the PCVR version. Unfortunately, we did not have time to properly balance the audio, so the volume is all over the place, but you have to cut something during a jam.
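The post doesn’t say what replaced the post-process fade on Quest. One option that avoids post-processing entirely is to draw a black overlay close to the camera and animate its opacity. Whatever the rendering trick, the timing logic can be shared between picture and sound, as in this hypothetical Python sketch where a single fade value drives both the overlay opacity and a volume multiplier (the class name and the 0.5 s duration are assumptions).

```python
from dataclasses import dataclass
from typing import Tuple


def smoothstep(x: float) -> float:
    """Clamp x to [0, 1] and ease it in and out."""
    x = min(1.0, max(0.0, x))
    return x * x * (3.0 - 2.0 * x)


@dataclass
class ScreenAndSoundFade:
    duration: float = 0.5   # seconds; assumed value
    elapsed: float = 0.0
    fading_out: bool = True

    def start(self, fading_out: bool) -> None:
        """Begin a fade to black (True) or back from black (False)."""
        self.fading_out, self.elapsed = fading_out, 0.0

    def tick(self, dt: float) -> Tuple[float, float]:
        """Advance the fade; return (overlay_opacity, volume_multiplier)."""
        self.elapsed += dt
        progress = smoothstep(self.elapsed / self.duration)
        opacity = progress if self.fading_out else 1.0 - progress
        # Sound follows the picture: fully black means fully silent.
        return opacity, 1.0 - opacity
```

Driving both outputs from one value guarantees that the picture and the audio never drift out of sync during deaths and level transitions.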

Packaging and submission

We made shipping builds with “Full rebuild” many times a day: every time we ran the game natively on Oculus Quest, and often when running it on PC. Because of that, the final packaging went extremely smoothly, which helped us a lot, since we did not have time to playtest the final build.

A friend was kind enough to test an early Oculus Quest build (4 hours before the deadline), so we knew it installed via SideQuest and that the levels weren’t too hard for someone who had owned a VR headset for a day and a half.

We started recording the gameplay an hour before the deadline and had the video up on YouTube 30 minutes before the deadline. By the time we first saw the jam submission form, we only had 10 minutes left. Finally, the form is filled in, 4 minutes are left, we press the button… and the submission fails: “Invalid Game”. It turns out that editing the itch.io page from two PCs simultaneously is a bad idea: the page went from public back to draft. We were extremely lucky and managed to submit untested but working builds 3 minutes before the deadline.

What went wrong

Surprisingly little! We didn’t have enough time to thoroughly playtest the submitted builds or to decorate the itch.io page. The submission itself was also a little too stressful, with an unexpected “Invalid Game” error 4 minutes before the deadline. Other than that, the process was relatively smooth. We got extremely lucky: we had used up all of our buffer time, and any mistake could have broken our submission. However, that luck was boosted by good preparation and by two pairs of eyes constantly double-checking each other’s work.

Rules that worked really well for us

- Release a small finished game beforehand. You do not want to experience the full stack of building a game for the first time during a serious jam like Epic MegaJam. Nailing the basics beforehand lets you experiment with the fun stuff!

- Study the winners, the judging system and the unwritten rules of the game.

- Prepare a good template and have a shipping build ready before the jam.

- If you can’t turn the idea into a complete vision for the game, start doing something and let the game write itself.

- If you are developing several different mechanics, separate minimal testing levels will improve your iteration speed. Having a level for stress testing is also crucial when developing for mobile VR.

- Organizers know their stuff. Package early, package often. We had a shipping build ready before the jam, packaged multiple times a day every day, and had no problems with packaging.

- Stay organized. Have a plan laid out as early as possible and put a large buffer in. Adjust daily and even more frequently near the deadline. Use version control, name your builds. Have team members with low-spec PCs? Set up a shared DDC and forget about compiling shaders ever again.

- No pressure — no fun


In SPACE no one can hear you CLICK

Surreal VR platformer inside of an RGB keyboard

| Status | Prototype |
|---|---|
| Author | hollowdilnik |
| Genre | Platformer |
| Tags | 3D Platformer, Oculus Quest, Surreal, Unreal Engine, Virtual Reality (VR) |


Comments


Don’t have a VR headset? We’ve recorded a full playthrough! https://www.youtube.com/watch?v=AIxQ8ZUeU1M